

Another live color test. The resulting image is somewhat clearer here than in the last live test. The key to getting the most from Pixelvision cameras lies in the control you have over a versatile, even light source. This means less work for the PXL-2000's sensor.

The freezing on each color channel is caused by the frame hold control mod on each camera.

Thanks to Paul Arcus for standing in for the test and whipping up a tripod / camera rig!


Here's a short video demonstrating Trixelvision's basic setup.

Finally got around to firing up the system today. One of the composite-to-component converters wasn't registering its composite input signal, which meant that the final image was missing a color channel. I tried a number of fixes - rebooting the unit, swapping its composite input with the signal from each of the other two cameras, bypassing the time base corrector, and switching the mode of the device to YCbCr instead of RGsB in the hope of producing any image. Unfortunately none of these worked, which led me to believe the unit was faulty.

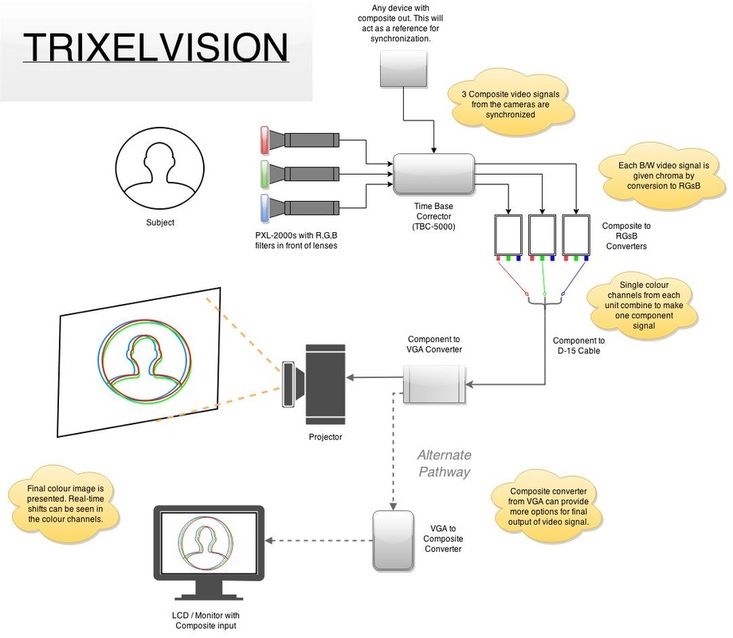

After several reboots of the device and reconnections of the composite signal, the converter finally decided to detect the input. I'm not sure what causes this bug or how to avoid it, but after some testing since, it appears that given enough time during the setup of Trixelvision, the unit will eventually function of its own accord. Anyway, here is a small, rudimentary sample video of Trixelvision performing in its first live tests. As mundane as these tests may appear, the purpose of this video is to demonstrate Trixelvision in its first live operational state. The fundamentals shown here will pave the way for more creative experimental examples, which I will post in the near future.

Before I get stuck into the final phase of testing, I figured it would be good to simply lay out a basic schematic of what's going on in the system, in case anyone is curious to know how it is supposed to come together.

As always, please forgive my lack of correct terminology and engineering standards!

Several months have passed since I last posted on this project. I've been traveling, and haven't had the chance to contribute much beyond keeping my eyes peeled for a cheap time base corrector with multiple inputs.

Enter the Datavideo TBC-5000. A somewhat versatile unit, it has four BNC/S-video inputs and outputs, hot switching and PIP options, genlock capability, and four inbuilt time base correctors. The TBC-5000 only needs to serve one purpose with Trixelvision, and that is to synchronize the three video signals coming from the PXL-2000s. These machines usually retail for around $800USD, but I managed to score a fully functioning second-hand one for $300USD. That's $400 less than if I'd invested in three separate time base correctors and daisy-chained them together instead. This is the last component I needed for the system to perform live RGB vision tests. I'll go out and buy 10 BNC-to-RCA converters tomorrow, and hopefully get stuck into hooking it all up!

Here's another test video I shot following the reinstallation of an infrared cut filter in the PXL-2000. Although working offline with a single camera still makes it difficult to maintain an even color balance and RGB alignment, you can certainly notice the difference from the last test (see previous post), where the infrared light was interfering with the color.

Note the weird light blue and pink tone in much of the image.

I shot some more non-live tests last week, then composited the vision in After Effects. I'll have to keep using this method until I have access to one or more time base correctors.

The toughest part of performing the tests offline isn't so much the time spent collecting the vision from the tapes and cards and then processing it in compositing software. The difficult part is not having instant control over the gain on each color channel (camera). I have to rely on the naked eye to estimate an even b/w exposure of each RGB channel across the three LCD monitors, in the hope they will be close enough when I drop them into After Effects. I don't want to rely on plugins and level adjustments in After Effects to fix the color balance.

Anyway, I came to a new problem following last week's test. No matter how much I tried to tweak the composited vision in After Effects, I couldn't produce anything near real color. The video below shows an example of what I was dealing with.
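For readers who want to see what "an even exposure on each channel" amounts to numerically, here is a minimal sketch in plain Python. It is not the After Effects workflow described above, and it is not how the cameras are balanced in practice. It simply treats each camera's b/w frame as one color channel, stacks the three into RGB pixels, and optionally scales each channel so that all three share the same average brightness. The function names (`mean_level`, `balance_gains`, `composite_rgb`) are made up for illustration, and equalizing the averages assumes the scene is roughly neutral overall, which will not hold for every subject.

```python
def mean_level(frame):
    """Average brightness of a grayscale frame given as rows of 0-255 ints."""
    total = sum(sum(row) for row in frame)
    count = sum(len(row) for row in frame)
    return total / count


def balance_gains(red, green, blue):
    """Gains that pull each channel's mean to the average of the three means.

    Assumption: the scene is roughly neutral, so equal means is a fair target.
    """
    means = [mean_level(f) for f in (red, green, blue)]
    target = sum(means) / 3
    return [target / m if m else 1.0 for m in means]


def composite_rgb(red, green, blue, gains=(1.0, 1.0, 1.0)):
    """Stack three same-sized grayscale frames into rows of (r, g, b) pixels."""
    def scale(value, gain):
        # Apply the gain and clamp to the valid 8-bit range.
        return min(255, max(0, round(value * gain)))

    return [
        [
            (scale(r, gains[0]), scale(g, gains[1]), scale(b, gains[2]))
            for r, g, b in zip(red_row, green_row, blue_row)
        ]
        for red_row, green_row, blue_row in zip(red, green, blue)
    ]


# Three tiny one-row frames with deliberately uneven exposure.
red = [[100, 100]]
green = [[50, 50]]
blue = [[150, 150]]

gains = balance_gains(red, green, blue)
balanced = composite_rgb(red, green, blue, gains)
# Every pixel becomes (100, 100, 100): a flat scene comes out neutral gray.
```

Without the gains, the same frames would composite to (100, 50, 150), a purple cast, which is the kind of skew that uneven exposure across the three cameras introduces.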

I carefully considered what was different between this test and the first color test I performed, where the results were near-perfect color images. After some deliberation, I could really only come to one conclusion: something about the subject's light source had changed. In this test, I had filmed the subject in natural sunlight, whereas the first test video was taken of a computer's LCD screen. LCD screens don't produce infrared light.

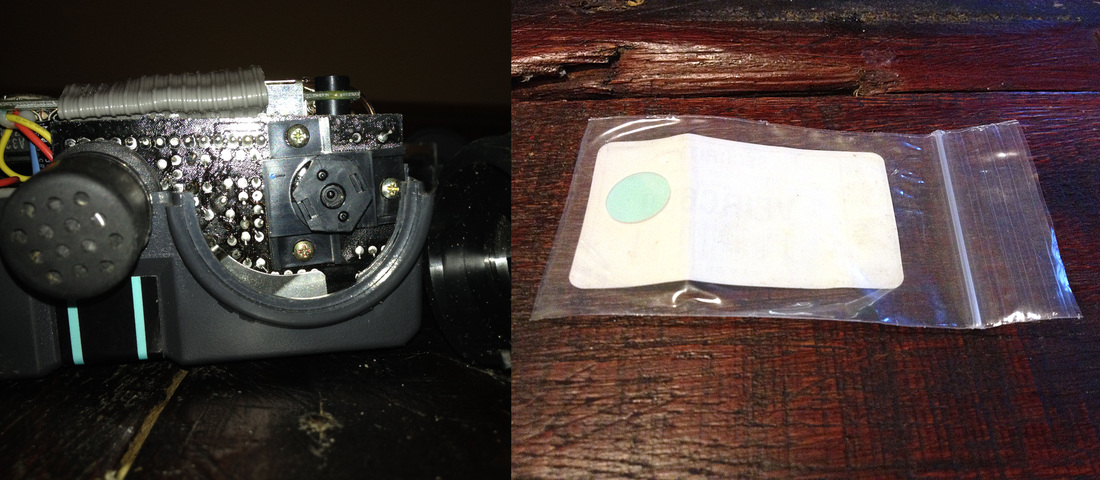

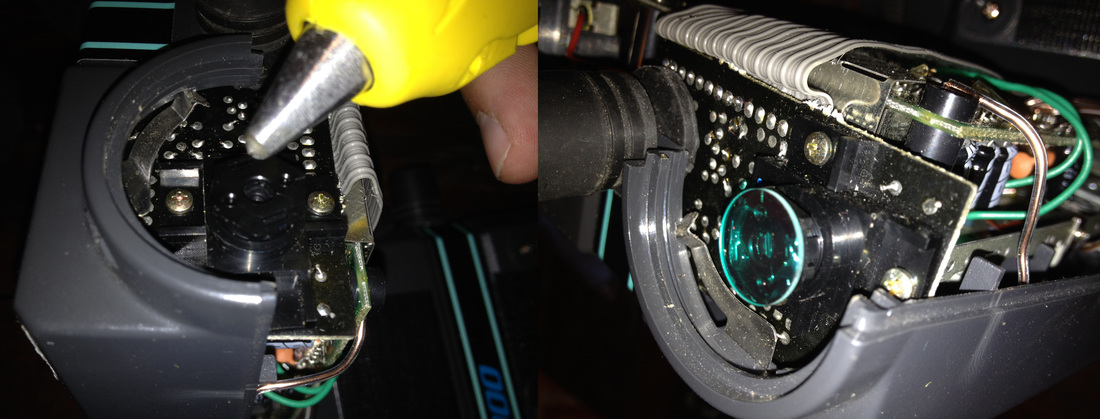

I instantly remembered an article I had read on the PXL-2000 about the effects of a translucent 'blue square', which is in fact a tiny infrared (IR) cut filter that sits in front of the on-board lens. PXL-2000s are so old now that these filters have become subject to corrosion, causing a hazy, foggy cloud over the recorded image. Most owners have chosen to simply remove these filters completely, rather than replace them. Opening up all three of my cameras revealed that none of them still had an IR cut filter. Removing the IR cut filter and allowing infrared light to pass onto the sensor doesn't really affect the black and white video enough to notice, but when that black and white video is used to create a color image, surely it would look hideous*. Which brought me to a solution: simply reinstall one. I marched to B&H and bought three of the smallest IR cut filters they had. 13mm filters were small enough to do the trick, and they amounted to only $5 a pop. At last, I had the cut filters ready to go. Using a glue gun, I attached the filters to the tiny lens hoods and closed up the cameras. The test that followed proved me right: the skewed color balance was gone. The IR cut filters are essential to this project.

Left image: Lens without IR cut filter. Right image: IR cut filter.

A tiny dab of glue held it in place. Had to be careful not to put glue on the lens itself.

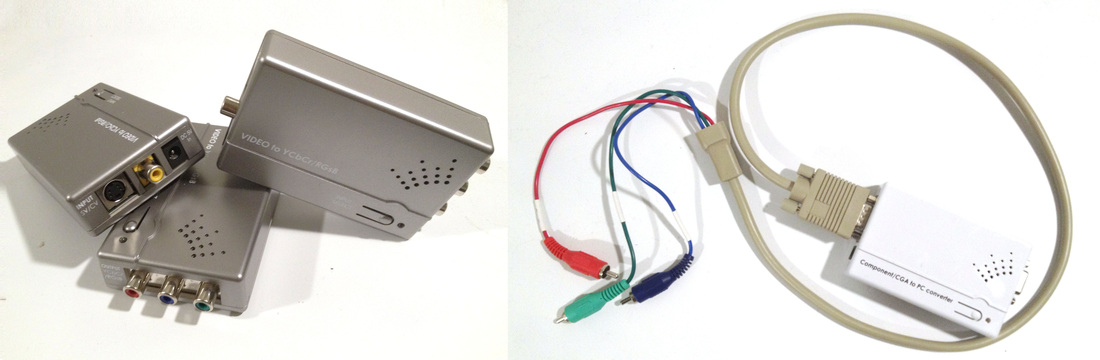

*Here's a good example of what footage looks like when you remove the IR cut filter from a color video camera.

Three composite-to-component RGsB devices just arrived. I also ordered a component RGsB-to-VGA converter. These will allow me to combine the three b/w video channels into a single color signal.

After asking around on some online video engineering forums, I've determined that it will be essential to synchronize each of my video sources before combining the converted component signals into the final output. For anyone curious to know what synchronizing is, here is my best explanation.

In order to combine any live video signals, whether via mixing, switching, or blending using a device, all of the sources must be 'in sync'. A video signal contains tiny voltage pulses that tell the output device when to accept new information from the signal and display an image. There are two types of sync pulses - horizontal and vertical. Think in terms of a television, which uses an electron beam to create the image we see on our screens. We can't see it, but an image is made up of many tiny lines. The horizontal sync pulse in a video signal tells the electron beam when to draw a line from the left of the screen to the right of the screen. Once enough horizontal lines have been drawn from top to bottom on the screen, the vertical sync pulse tells the beam to go to the top of the screen and start again to create the next frame we see.

Like all signals, these pulses repeat at a set frequency. In basic terms, because frequency is about timing, you can't simply switch on several devices and expect their pulses to line up. In the case of broadcast cameras, the I/O Genlock system was designed so that the camera's pulse signals could be aligned to any incoming source. This way cameras could be daisy-chained to one another, ensuring their outputs were synchronized for any mixing device taking their signals. More information about synchronization can be found here. The following short video also presents a good example of it in action.

To synchronize the video sources coming from the three PXL-2000s, I'll need to use frame-synchronizing time base correctors (TBCs). The TBCs should allow me to synchronize the signals to a reference source.
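To put rough numbers on the timing problem, here is a small Python sketch. It assumes standard NTSC timing (525 lines per frame at 30000/1001 frames per second); the post does not state which standard the cameras output, so treat that as an assumption. It works out how often the horizontal and vertical pulses occur and how far out of step two free-running sources would be if one started later than the other. The names `line_period_us` and `sync_offset` are made up for illustration.

```python
from fractions import Fraction

# Assumption: standard NTSC timing, not measured from the cameras themselves.
LINES_PER_FRAME = 525
FRAME_RATE = Fraction(30000, 1001)        # vertical sync: about 29.97 per second
FRAME_PERIOD = 1 / FRAME_RATE             # seconds per frame
LINE_RATE = LINES_PER_FRAME * FRAME_RATE  # horizontal sync: about 15734 per second


def line_period_us():
    """Time between two horizontal sync pulses, in microseconds."""
    return float(1_000_000 / LINE_RATE)


def sync_offset(start_a, start_b):
    """Whole lines by which source B trails source A within a frame.

    start_a and start_b are the moments (in seconds) each source began
    drawing its first frame. An offset of 0 means the frames line up.
    """
    delta_lines = (Fraction(start_b) - Fraction(start_a)) * LINE_RATE
    return (delta_lines // 1) % LINES_PER_FRAME


# Two sources started exactly one frame apart are back in step...
aligned = sync_offset(0, FRAME_PERIOD)        # 0 lines

# ...but half a frame apart, B is drawing the middle of the picture
# while A is starting at the top.
half_frame = sync_offset(0, FRAME_PERIOD / 2)  # 262 lines
```

Each line lasts only about 63.6 microseconds, so cameras switched on by hand land at an essentially arbitrary offset. A frame-synchronizing TBC gets around this by buffering each source and releasing it in step with a shared reference.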
I can't see myself spending $600USD on three time base correctors right now, so I'm going to wait until a decent TBC that can take multiple inputs comes up on eBay.

Unlike most conventional cameras, the PXL-2000 doesn't come with a screw mount or base that can be attached to a tripod. In order to align all three cameras for the Trixelvision tests, I'm going to need a way to secure them evenly to the long base plate I purchased last week. In answer to the problem, I've decided to improvise and slap together a couple of clamping mounts using bits I found at Home Depot.

With 'tight-ass' somewhat being the running theme for Trixelvision, my mounts cost only a couple of dollars to make.

Archives

February 2015
